The human face is a remarkable piece of work. The astonishing variety of facial features helps people recognize each other and is crucial to the formation of complex societies. So is the face’s ability to send emotional signals, whether through an involuntary blush or the artifice of a false smile.

People spend much of their waking lives, in the office and the courtroom as well as the bar and the bedroom, reading faces for signs of attraction, hostility, trust and deceit. They also spend plenty of time trying to conceal those signs.

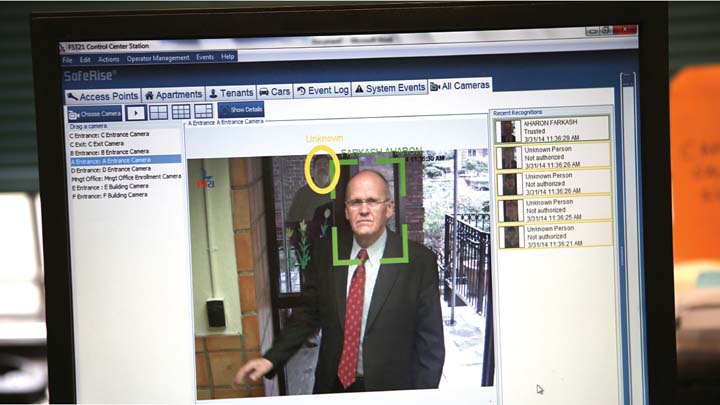

Technology is catching up rapidly with the human ability to read faces. In the United States, facial recognition is used by churches to track worshippers’ attendance; in Britain, by retailers to spot past shoplifters. This year, Welsh police used it to arrest a suspect outside a football game. In China, it verifies the identities of ride-hailing drivers, permits tourists to enter attractions and lets people pay for things with a smile. Apple’s new iPhone is expected to use it to unlock the home screen.

Set against human skills, such applications might seem incremental. Some breakthroughs, like flight or the internet, obviously transform human abilities. Facial recognition seems merely to encode them.

Although faces are peculiar to individuals, they are also public, so technology, at first sight, does not intrude on something that is private. And yet the ability to record, store and analyze images of faces cheaply, quickly and on a vast scale promises one day to bring about fundamental changes to notions of privacy, fairness and trust.

Start with privacy. One big difference between faces and other biometric data, like fingerprints, is that faces work at a distance. Anyone with a phone can take a picture for facial-recognition programs to use. FindFace, an app in Russia, compares snaps of strangers with pictures on VKontakte, a social network, and can identify people with a 70% accuracy rate. Facebook’s bank of facial images cannot be scraped by others, but the Silicon Valley giant could obtain pictures of visitors to a car showroom, say, and later use facial recognition to serve them ads for cars.

Even if private companies are unable to join the dots between images and identity, the state often can. China’s government keeps a record of its citizens’ faces; photographs of half of the United States’ adult population are stored in databases that can be used by the FBI.

Law-enforcement agencies now have a powerful weapon in their ability to track criminals, but at enormous potential cost to citizens’ privacy.

The face is not just a nametag. It displays a lot of other information—and machines can read that too.

Again, that promises benefits. Some firms are analyzing faces to provide automated diagnoses of rare genetic conditions, like Hajdu-Cheney syndrome, far earlier than would otherwise be possible. Systems that measure emotion may give autistic people a grasp of social signals they find elusive.

But the technology also threatens. Researchers at Stanford University have demonstrated that, when shown pictures of one gay man and one straight man, an algorithm could attribute their sexuality correctly 81% of the time. Humans managed only 61%. In countries where homosexuality is a crime, software that promises to infer sexuality from a face is an alarming prospect.

Less violent forms of discrimination could also become common. Employers can already act on their prejudices to deny people a job. But facial recognition could make such bias routine, enabling companies to filter all job applications for ethnicity and signs of intelligence and sexuality.

Nightclubs and sports grounds may face pressure to protect people by scanning entrants’ faces for the threat of violence, even though all facial-recognition systems, owing to the nature of machine learning, inevitably deal in probabilities.

Moreover, such systems may be biased against those who do not have white skin, since algorithms trained on data sets of mostly white faces do not work well with different ethnicities. Such biases have cropped up in automated assessments used to inform courts’ decisions about bail and sentencing.

Eventually, continuous facial recording and gadgets that paint computerized data onto the real world might change the texture of social interactions.

Dissembling helps grease the wheels of daily life. If your partner can spot every suppressed yawn, and your boss every grimace of irritation, marriages and working relationships will be more truthful, but less harmonious. The basis of social interactions might change, too, from a set of commitments founded on trust to calculations of risk and reward derived from the information a computer attaches to someone’s face. Relationships might become more rational, but also more transactional.

In democracies, at least, legislation can help alter the balance of good and bad outcomes. European regulators have embedded a set of principles in forthcoming data-protection regulation, decreeing that biometric information, which would include “faceprints,” belongs to its owner and that its use requires consent—so that, in Europe, unlike the United States, Facebook could not just sell ads to those car-showroom visitors.

Laws against discrimination can be applied to an employer screening candidates’ images. Suppliers of commercial face-recognition systems might submit to audits, to demonstrate that their systems are not propagating bias unintentionally. Companies that use such technologies should be held accountable.

© 2017 Economist Newspaper Ltd., London (September 9). All rights reserved. Reprinted with permission.

Image credits: Ozier Muhammad/The New York Times